If you decide to roll your own in-house A/B testing solution, you’re going to need a way to measure how each variation in each test influences user behavior.

In my experience, the best way to do this is to take advantage of a third-party analytics tool and piggyback on its funnel segmentation features. This post is about how to do that.

Funnel Segmentation 101

Consider this funnel from Lean Domain Search:

- A user performs a search

- Then clicks on a search result

- Then clicks on a registration link

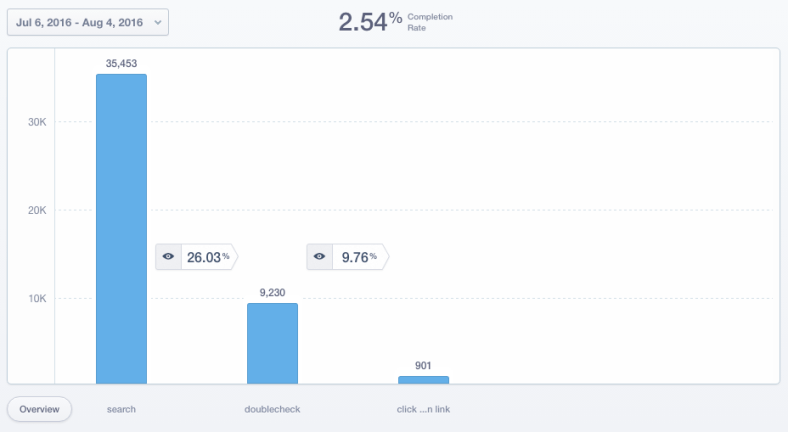

In Mixpanel, the funnel looks like this:

Of the 35K people who performed a search, 9K (26%) clicked on a search result. Of those, 900 then clicked a registration link, which is 10% of the people who clicked and 2.5% overall.
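The percentages above are simple ratios of the step counts. Below is a minimal sketch of that arithmetic. It uses the rounded counts from the text (35K, 9K, 900) as illustrative inputs, and the helper name `funnelRates` is hypothetical:

```javascript
// Hypothetical helper: given the number of users reaching each funnel step,
// compute conversion relative to the previous step and to the first step.
function funnelRates(counts) {
  return counts.map((count, i) => ({
    count,
    // The first step converts 100% of itself by definition.
    fromPrevious: i === 0 ? 1 : count / counts[i - 1],
    // Share of everyone who entered the funnel.
    overall: count / counts[0],
  }));
}

// Rounded counts: performed a search, clicked a result, clicked a registration link.
const rates = funnelRates([35000, 9000, 900]);
for (const step of rates) {
  console.log(
    step.count,
    (step.fromPrevious * 100).toFixed(1) + '%',
    (step.overall * 100).toFixed(1) + '%'
  );
}
```

Because the inputs are rounded, the printed percentages can differ slightly from the figures quoted above.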

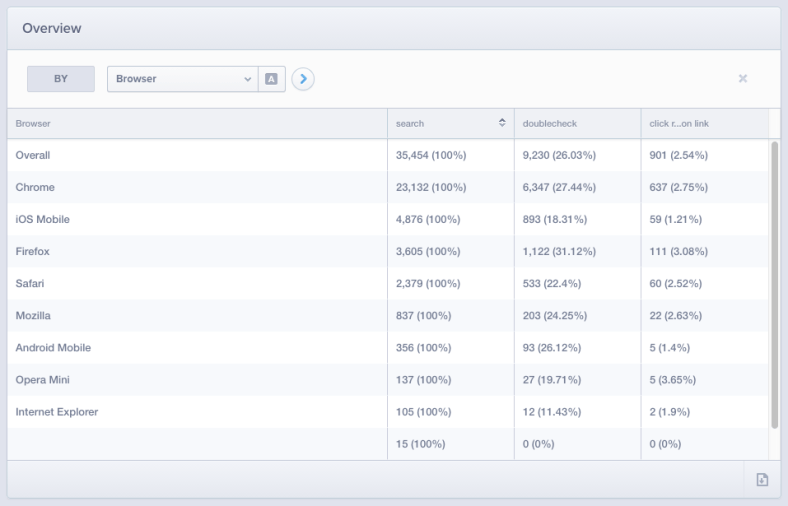

We can then use Mixpanel’s segmentation feature to segment on various properties to see how they impact the funnel. For example, here’s what segmenting on Browser looks like:

We can see that 27% of Chrome searchers click on a search result, compared to only 18% of iOS Mobile searchers. We could also segment on other properties that Mixpanel’s tracking client collects automatically, such as the visitor’s country or the search engine they came from. Most importantly for our purposes here, we can also segment on custom event properties that we pass ourselves.
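Conceptually, segmenting a funnel means grouping users by a property value and computing the funnel separately for each group. The sketch below illustrates that idea over an in-memory list of events. It is not how Mixpanel computes funnels internally. The event names (`Performed Search`, `Clicked Result`, `Clicked Registration`), the `browser` property, the event shape, and the function name are all hypothetical. A step counts only if it happens after the previous step, and the segment value is read from the user's first-step event.

```javascript
// Hypothetical sketch, NOT Mixpanel's implementation: break a funnel down by a
// property value read from each user's first-step event.
function segmentedFunnel(events, steps, segmentProperty) {
  // Group events by user.
  const byUser = new Map();
  for (const event of events) {
    if (!byUser.has(event.userId)) byUser.set(event.userId, []);
    byUser.get(event.userId).push(event);
  }

  const segments = {}; // segment value -> number of users reaching each step
  for (const userEvents of byUser.values()) {
    // Walk each user's events in time order; a step only counts after the previous one.
    userEvents.sort((a, b) => a.time - b.time);
    let stepIndex = 0;
    let segment;
    for (const event of userEvents) {
      if (stepIndex >= steps.length) break;
      if (event.name !== steps[stepIndex]) continue;
      if (stepIndex === 0) {
        segment = String(event.properties[segmentProperty]);
        if (!segments[segment]) segments[segment] = new Array(steps.length).fill(0);
      }
      segments[segment][stepIndex] += 1;
      stepIndex += 1;
    }
  }
  return segments;
}

// Hypothetical event names and data.
const steps = ['Performed Search', 'Clicked Result', 'Clicked Registration'];
const events = [
  { userId: 'u1', name: 'Performed Search', time: 1, properties: { browser: 'Chrome' } },
  { userId: 'u1', name: 'Clicked Result', time: 2, properties: {} },
  { userId: 'u2', name: 'Performed Search', time: 1, properties: { browser: 'Chrome' } },
  { userId: 'u3', name: 'Performed Search', time: 1, properties: { browser: 'iOS Mobile' } },
  { userId: 'u3', name: 'Clicked Result', time: 3, properties: {} },
  { userId: 'u3', name: 'Clicked Registration', time: 4, properties: {} },
];

console.log(segmentedFunnel(events, steps, 'browser'));
// Chrome reaches [2, 1, 0] users per step; iOS Mobile reaches [1, 1, 1].
```

Dividing each count by the first count in its segment gives per-segment conversion rates like the 27% versus 18% above. Real funnel tools also apply details this sketch ignores, such as conversion windows.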

Passing Variations as Custom Event Properties

Segmenting on a property like the visitor’s country is very similar conceptually to segmenting on which A/B test variation a user sees. In both cases we’re breaking down the funnel to see what impact the property value (each country or each variation) has on the rest of the funnel.

Consider a toy A/B test that measures the impact of the homepage’s background color on sign ups.

When the visitor lands on the homepage, we fire a Visited Homepage event with an abtest_variation property set to the name of the variation the user sees:

```javascript
mixpanel.track( 'Visited Homepage', {
  abtest_variation: 'white'
} );
```

With this in place, you can then set up a funnel such as:

- Visited Homepage

- Signed Up

Then segment on abtest_variation to see what impact each variation has on the rest of the funnel.

In the real world, you won’t have 'white' hardcoded like it is in the code snippet above. Instead, make sure that whichever variation the user is assigned to gets passed as the value of abtest_variation on that tracking event.
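The sketch below shows one way to do that. It makes several assumptions. Users get a stable ID (anonymous visitors would need one persisted, for example in a cookie). Assignment hashes that ID with the 32-bit FNV-1a hash, so the same user always sees the same variation without storing anything. The `tracker` argument stands in for the `mixpanel` object, whose `track(eventName, properties)` call is the one used above. The test name 'homepage background', the 'blue' variation, and all function names are hypothetical.

```javascript
// 32-bit FNV-1a hash of a string; deterministic, so a user always gets the same bucket.
function fnv1a(str) {
  let hash = 0x811c9dc5;
  for (let i = 0; i < str.length; i++) {
    hash ^= str.charCodeAt(i);
    hash = Math.imul(hash, 0x01000193) >>> 0;
  }
  return hash >>> 0;
}

// Hypothetical helper: including the test name in the key makes different
// tests bucket the same user independently.
function assignVariation(userId, testName, variations) {
  const bucket = fnv1a(testName + ':' + userId) % variations.length;
  return variations[bucket];
}

// Hypothetical helper: pass the assigned variation, not a hardcoded value.
function trackHomepageVisit(tracker, userId) {
  const variation = assignVariation(userId, 'homepage background', ['white', 'blue']);
  tracker.track('Visited Homepage', { abtest_variation: variation });
  return variation;
}

// In the browser you would pass the mixpanel object; here a stub records the calls.
const calls = [];
const stubTracker = { track: (name, props) => calls.push({ name, props }) };
const assigned = trackHomepageVisit(stubTracker, 'user-42');
console.log(assigned, calls[0]);
```

A hash-based assignment is just one option. Assigning randomly and storing the result also works, as long as the stored value is what gets sent on the event.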

Further improvements

The setup above should work fine for your v1, but there are several ways to improve it for long-term testing.

Pass the test name as an event property

I recommend also passing an abtest_name property on the event:

```javascript
mixpanel.track( 'Visited Homepage', {
  abtest_name: 'homepage test 3',
  abtest_variation: 'white'
} );
```

The advantage is that if you run tests back to back, you can set up your funnel to look only at the results of a specific test. You won’t have to worry that identically named variations from earlier tests are skewing the results, which would happen if, for example, you started a test on the same day a previous test ended. The funnel would look like this:

- Visited Homepage where abtest_name = homepage test 3

- Signed Up

Then segment on abtest_variation like before to see just the results of this A/B test.
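To make the effect of the abtest_name filter concrete, here is a minimal sketch that computes per-variation results for one test from an in-memory event list. It is an illustration under assumptions, not how Mixpanel evaluates the funnel. The event shape, the earlier test name 'homepage test 2', the 'blue' variation, and the function name are hypothetical; 'Visited Homepage' and 'Signed Up' are the events from the funnel above.

```javascript
// Hypothetical sketch: per-variation results for ONE test. Only first-step events
// whose abtest_name matches are counted, so an identically named variation
// (e.g. 'white') from an earlier test cannot leak into this test's numbers.
function resultsForTest(events, testName, firstStep, goal) {
  // userId -> variation and time of the user's earliest matching first-step event
  const entered = new Map();
  for (const e of events) {
    if (e.name !== firstStep || e.properties.abtest_name !== testName) continue;
    const previous = entered.get(e.userId);
    if (!previous || e.time < previous.time) {
      entered.set(e.userId, { variation: e.properties.abtest_variation, time: e.time });
    }
  }

  // A user converts if they fired the goal event at or after entering the test.
  const converted = new Set();
  for (const e of events) {
    const start = entered.get(e.userId);
    if (e.name === goal && start && e.time >= start.time) converted.add(e.userId);
  }

  const results = {};
  for (const [userId, { variation }] of entered) {
    if (!results[variation]) results[variation] = { users: 0, converted: 0 };
    results[variation].users += 1;
    if (converted.has(userId)) results[variation].converted += 1;
  }
  return results;
}

const events = [
  // u1 saw 'white' in an earlier test and must not count toward homepage test 3.
  { userId: 'u1', name: 'Visited Homepage', time: 1, properties: { abtest_name: 'homepage test 2', abtest_variation: 'white' } },
  { userId: 'u1', name: 'Signed Up', time: 2, properties: {} },
  { userId: 'u2', name: 'Visited Homepage', time: 3, properties: { abtest_name: 'homepage test 3', abtest_variation: 'white' } },
  { userId: 'u2', name: 'Signed Up', time: 4, properties: {} },
  { userId: 'u3', name: 'Visited Homepage', time: 3, properties: { abtest_name: 'homepage test 3', abtest_variation: 'blue' } },
];

const testResults = resultsForTest(events, 'homepage test 3', 'Visited Homepage', 'Signed Up');
console.log(testResults);
// white: 1 user, 1 converted; blue: 1 user, 0 converted (u1 is excluded)
```

Without the `abtest_name` check, u1's sign up from the earlier test would be counted as a 'white' conversion here.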

Generalize the event name

In the examples above, we’re passing the A/B test details as properties on the Visited Homepage event. If we’re running multiple tests on the site, we’d have to pass the A/B test properties on all of the relevant events.

A better approach is to fire a single, generic A/B test event with those properties instead:

```javascript
mixpanel.track( 'Assigned Variation', {
  abtest_name: 'homepage test 3',
  abtest_variation: 'white'
} );
```

Now the funnel would look like this:

- Assigned Variation where abtest_name = homepage test 3

- Signed Up

Then segment on abtest_variation again.

To see this in action, check out Calypso’s A/B test module (more on that module in this post). When a user is assigned an A/B test variation, we fire a calypso_abtest_start event with the name and variation:

```javascript
analytics.tracks.recordEvent( 'calypso_abtest_start', {
  abtest_name: this.experimentId,
  abtest_variation: variation
} );
```

We can then analyze the test’s impact on other events using Tracks, our internal analytics platform.

Benefits

The nice thing about using an analytics tool to analyze an A/B test is that you can measure the test’s impact on any event, even after the test has finished. For example, you might initially decide to measure the test’s impact on sign ups, then later decide you also want to measure its impact on users visiting your support page. Doing that is as easy as setting up a new funnel. You can even measure your test’s impact on multiple steps of your funnel, because that’s just another funnel.

Also, you don’t have to litter your code with lots of conversion events specific to your A/B test (the way A/Bingo does it), because you’ll probably already have analytics events set up for the core parts of your funnel.

Lastly, if your analytics provider offers an API, as Mixpanel does, you can pull the results of your A/B tests into an internal report, where you can also add significance results and other details about the test.
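The post doesn’t say which significance test such a report should use. One common choice for comparing two conversion rates is a two-sided two-proportion z-test. Below is a minimal sketch, assuming you have already pulled per-variation user and conversion counts (for example, through your analytics provider’s API) and that samples are large enough for the normal approximation. The function names are hypothetical, the counts are illustrative rather than real data, and the normal CDF uses the Abramowitz and Stegun 7.1.26 approximation of erf.

```javascript
// Hypothetical sketch: two-sided two-proportion z-test for variations A and B.
function twoProportionZTest(convA, usersA, convB, usersB) {
  const pA = convA / usersA;
  const pB = convB / usersB;
  // Pooled conversion rate under the null hypothesis that A and B convert equally.
  const pooled = (convA + convB) / (usersA + usersB);
  const se = Math.sqrt(pooled * (1 - pooled) * (1 / usersA + 1 / usersB));
  if (se === 0) return { pA, pB, z: 0, pValue: 1 }; // no variance: nothing to test
  const z = (pB - pA) / se;
  const pValue = 2 * (1 - normalCdf(Math.abs(z)));
  return { pA, pB, z, pValue };
}

// Standard normal CDF via the Abramowitz & Stegun 7.1.26 approximation of erf
// (absolute error below about 1.5e-7).
function normalCdf(x) {
  const t = 1 / (1 + 0.3275911 * (Math.abs(x) / Math.SQRT2));
  const poly = t * (0.254829592 + t * (-0.284496736 + t * (1.421413741 + t * (-1.453152027 + t * 1.061405429))));
  const erf = 1 - poly * Math.exp(-(x * x) / 2); // erf(|x| / sqrt(2))
  return x >= 0 ? (1 + erf) / 2 : (1 - erf) / 2;
}

// Illustrative counts: 100 of 1,000 users converted in A, 130 of 1,000 in B.
const example = twoProportionZTest(100, 1000, 130, 1000);
console.log(example.z.toFixed(2), example.pValue.toFixed(3));
```

With these illustrative counts, z comes out at about 2.1 and the p-value at about 0.035. If you check results repeatedly while a test is still running, you need a correction or a sequential method, which this sketch doesn’t handle.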

If you have any questions about any of this, don’t hesitate to drop me a note.