In the last post, I explained how in mid 2007 I partnered with a talented poker player to help develop a full stacked, full ring $1/$2 No Limit Hold’em bot.

After laying out our preflop strategy and a bit of prototyping, we moved onto planning for postflop play. The strategy email below was written by him and it outlines in detail how we wanted to approach it.

While we never wound up building it, I did successfully implement many of the techniques while building a Heads Up No Limit bot several months later which I’ll detail in a future post.

If you have any questions feel free to leave a comment or shoot me an email.

###

Intro: Approaching Postflop

There are innumerable ways to determine action postflop in poker. Over time, using math, game theory, and experience, the methods that most mid-high stakes poker players use has started to become standardized. While I am quite familiar with these methods, and can describe them for the most part in detail (although this would take the space of a large book), most can’t be systemized and automated in ways directly useful to a bot. What are some other, more rigorous ways of making decisions that can replace the more intuitive aspects of mid-high pros’ processes? To answer this question, we must take a step back and analyze poker from a basic mathematical perspective.

I won’t get into the specifics of this analyzation here. I think Chen and Ankenman do a really good job of laying forth a very solid mathematical groundwork in their book. However, approaching poker from an entirely math perspective has the opposite problem of trying to copy the pros’ style: Instead of being too exclusive and intuitive, any resultant system would by impossibly hard to program because of the ridiculous level of complex programming iterations and unsolved game theory and other high level math involved.

Instead, I propose a system that uses mathematical and game theory principles on EV and hand ranges that would be impossible for a regular player to compute live, and then that we take short cuts modeled after pros’ decision making to cut tough corners and help with a lot of the evaluations. We can also use other innovative programming strategies and modules taken from other fields to solve specific problems, such as using neural nets or Bayesian statistical techniques to predict opponent actions. The trick here is first determining what will produce profitable play, and then balancing our techniques and strategies so that we can reach the final stages of this project in a reasonable amount of time. Another concern is writing our program in such a way that we can easily add, tweak, and replace things later on.

This document will contain three sections. First, I will describe an idealized form of the program and the decisions involved in making it, relying mostly on math and game theory, without getting into a lot of specifics regarding the inner workings. Next, I will describe a much more realistic system, replacing a lot of the difficult generalizations of the first section with more realistic methods and strategies. Included in this will be details about some of the specific strategies and methods employed. Lastly, I will give a conclusion detailing what I see as the biggest problems and the most efficient ways to go about solving them, and a rough timeline for this project.

Section 1: A Naïve Plan

The purpose of this section is to provide a frame of reference while finalizing our actual system. As problems arise, new methods are discovered, and our actual system shifts, it is important to have something to look back on to help keep perspective. That is not to say however that what is written here is final… I will try to use a level of abstraction that is both useful to planning future changes and is still unlikely to change, but this is a line drawn subjectively in sand.

There are a number of more abstract questions that need to be answered before we can move on to actual systems. The only one I will go into any depth here is the distinction between exploitive play and optimal play. Generally, good players focus on exploitive play while most bots and math focus on unexploitable play. There are of course many exceptions, and lines can often be blurred in real application. Here are some quick arguments pertaining to exploitive vs. unexploitable as it relates to this project:

For unexploitable:

-Every time we try to exploit someone, we are opening ourselves up to exploitation. In normal play by professionals, this isn’t a large problem as we can counter-counter adjust to the counter adjustments. But modeling this in actual play with code is difficult to say the least. Making agile adjustments to game flow is something that humans will almost always have an edge in. As a bot, if we play an exploitive system, it is important to write a lot of protections in so that we are not consistently exploited for our attempts to exploit. These protections are potentially complex and take away from our time working on the more important nuts and bolts.

-If we could describe an unexploitable system, we would be a large winner at all levels regardless of opponents. We would not have to worry about all the levels of complexity involved in an exploitive system (figuring out opponent weakness, adjusting, counter adjusting, etc.).

For exploitive:

-We have access to a ton of information about our opponents’ styles, and can do complex sorting and statistical tests of this data to get information applicable to specific hands not available to players at the table. This makes it easier for us to exploit.

-The players we play against will be poor and exploitable on average. All but the best players at 2/4 will likely have serious leaks. Exploiting these leaks will be much much more profitable than playing unexploitably. Also, players at these levels will often have limited knowledge about how to take advantage of the adjustments we make.

-If we could play either style perfectly, exploitive will always make us substantially more money. The perfect exploitive strategy is THE perfect strategy.

-No one knows how to play unexploitably, but we do know how to exploit people. Describing an unexploitable system has some practical problems, and often it is easier to just react to opponent tendencies.

We will need to solve this problem independently for many individual situations. Right now, I think our basic strategy will should be thus: We write code that is mathematically exploitive of our opponents play characteristics, and hope to do a good enough job that when other players are playing unexploitably, we also tend towards unexploitable play. We start with the assumption that all others play somewhat unexploitably, and cautiously adjust our play to more exploitive lines as we receive evidence of opponent mistakes. This is an important concept that we will use a lot in section 2: using our opponents to balance our play.

Our system:

We will make all postflop decisions by evaluating the expected values of the various lines we can take, accounting for as many factors and possible future situations as we can. What follows are some of the root aspects and factors involved in this system.

Hand reading. We will read hands by assigning our opponents to a weighted range based on their preflop actions. Every time they act postflop, we will determine what hands they are likely to execute that specific action with, given that player’s play characteristics, and use Bayesian methods to figure out their new likely weighted range given their previous range and their most recent action. We will try to be extremely careful with this process, and will spend significant time making sure that we are effective in getting accurate ranges with cushions for errors. A lot of our overall profitability depends on our ability to hand read, as we will use these hand ranges for multiple purposes.

Predicting future actions. There are a number of different ways this is possible. We can use our opponents’ range of hands and play characteristics combined with future possible cards. If we are less confident in our abilities to do this in an exhaustive fashion, we could use pure statistics of what our opponent has done when faced with similar situations in the past, or what players like him have done for lower samples. Another option is using databases to train neural networks in various ways to predict actions. We will use these predictions primarily to figure out the differing total EV of different lines, in conjunction with other things on this list.

Showdown equity. We can use the weighted range of opponents hand and future cards to guess our equity in the pot. Showdown equity, or SdE as I will refer to it, is our equity in the pot against our opponent’s range if we were to be all in on this street.

Extraneous equity. I will refer to this as ExE. It is the equity in the hand that is caused from factors outside our showdown equity, usually by how the hand is likely to play out. Our ExE will always equal the negative of our cumulative opponents’ ExE. ExE will usually play a larger roll than SdE in our decision making, especially on the flop. Determining ExE is an uncertain (we are forced to repeatedly guess) and involved process.

The final decision making equations. We will figure out the EV for each of our various possible lines, modeling future streets for possible cards and combining SdE with ExE. For any set of possibilities, our total EV of the line that leads to those possibilities is the sum of the equity of each possible outcome times the probability of that outcome happening. The probabilities should always sum to one.

Thus concludes the overview of our general postflop plan. There are a lot of things left in vague terms and plans that call for impossible or near impossible complexity. For example, how are we supposed to write an EV calculation for every turn and river card and action given a certain flop situation? This is called for above but would require writing out millions of functions by hand, which is obviously not going to happen. Coming up with innovative solutions for the problems associated with the above summary is perhaps our most important task.

Section 2: A first attempt

While I had much of the previous parts of this document written in my head before I contacted you the first time, this section will start to drift into newer territory. While some aspects of the system outlined below will be well thought out and are likely to remain intact in their described form throughout the project, others will be stopgaps or untested technology hopefully to be replaced and refined at a later point.

Please keep in perspective what this whole document is: a preliminary report on our approach to postflop, drafted in without consultation in a days. This is not a specific and complete plan, but it is a plan regardless. Because the purpose of this plan is to shed light on the different aspects of postflop play we need to work on, and to act as a survey of options available to us, to further these goals I will be adding commentary in blue outside the plan and different possible methods in red, and an overall discussion section at the end.

A system:

Here goes. It’s pretty dense, and not as well written as it could be, so it might take a few passes to decipher. I recommend reading everything that follows a few times (except maybe this next part which we have already covered), and giving comments the last time you read it.

First opponent hand ranging. We will start by obtaining a weighted range of hands from preflop actions. This will be accomplished first by determining the percent of all possible hands that this player would do his preflop actions with. Next, we will refer to a predrafted list ranking the ordered likelihood of each hand for that action. As many of these will be drafted as is seen fit, but the final number will likely be 4-8. A general probability density function will be created for various percents of total hands for each list. We will use the density function, the list, and the percent of hands to come up with a real probability density function of our opponent’s range which fits the percent.

This description is vague, but as you already have a more complete explanation, I don’t feel I need to go into detail here. Frankly, I think this system will work alright but not great. I think there are some innovative improvements that are possible, but I don’t see any other substantially different way to do what we need to do. I think the biggest problems with this system are the oversimplification of having only 4-8 lists, and the course way in which the distributions are fitted. Strength of this system include how easy it is to tweak and modify and how straight forward it will be in implementing (we already have a working sample algorithm for a distribution function).

Possible improvements include a dynamic system of creating lists for each situation and each player, and dynamic methods of creating functions for determining the final distribution based on specific players and situations. For example, someone we viewed as a straight forward player (we could measure this by how much they play fit or fold on the flop and a few other things, for example) we could create a really value based list for their raises and the more confident we were in the read, the more the function would be towards the top (i.e., there would be more hands with 100% and bad hands would all have 0 weight).

Another possible method here is adapting our preflop program so that we could input opponent preflop statistics and card values and “my variables” will be changed such that someone using this preflop system would get stats similar to our opponent’s specific stats. Then we would run this program for preflop from their perspective and use this to get a hand range. The big positive about this system is that it could end up with much more accurate ranges than are possible any other way, but the big negative is that it would be complex (time consuming), possibly resource intensive, and hard to write.

General opponent hand ranging. Every time someone does an action throughout the hand or hypothetically (see later), this section will be activated and the opponent will be given a new hand range. This will be done by putting previous opponent hand ranges through a distribution transformation algorithm (DTA). A new DTA will be created for each situation.

To construct our DTA, if our opponent raised or bet, we will determine the hands our opponent would do this with for value, based on the board, the percent of time we predict he bets or raises here based on our neural net (described later), our current range strength (defined below) and opponent tendencies. We would then determine what types of semibluffing hands our opponent can have, and determine the likelihood of each given he raised or bet based on the percentage of his range that are draws, the strength of those draws, the percent of time we predict he bets or raises here, and the strength of our own range. We will then determine the likelihood he bluffs outright, based on the differences in SdE between our two ranges, and how often he bets here.

We will then take this new range consisting of value bets (all hands above the vb cutoff for immediate showdown value), semi bluffs, and bluffs, and normalize it based on the percent of times we think our opponent will take this action in this spot. We will obtain this percent through neural nets.

Our calling process will be very similar, except we will break things down into calling for value and floating. Value will make up a much large part of opponent ranges on average. We will determine which hands our opponent will call for value with based on our estimated percent chance he will call, the strength of our own range after making the bet, and his previous hand range.

When our opponent checks, we will similarly use how often he checks in this spot with the types of hands he is most likely to check with, and thus obtain our distribution.

For every time we use a DTA, after we determine which hands are likely, but before normalization occurs, we will “blur” the cut offs of hands that would be folded/raised/called. This blurring will be a gradating of percents based on the error of our neural net (described below). Another way you could describe this blurring is gradating, or smoothing, out the graph of the resultant transformation function. For example, say his range consists of (1 is fully part of his range and .5 is half discounted, not accounting for number of combinations possible) 1AA, 1KK, .8QQ, .5JJ before he called, and we estimate he will call 50% with the cutoff being at KK, and we have very low error from our neural net here, and there is a king in our hand. We take 50% (the percent he calls) of the total number of combinations present in the original distribution, which is 1(6)+1(3)+.8(6)+.5(6)=16.8. So our new distribution should be equal to 8.4 combinations and filled from the top down. This would result in 1AA (6 combos) and .8KK (2.4 combos). But if we are unsure about our read on his calling range, to account for possible errors, we don’t only fill top down but blur the line of the calling range. Our end result will still equal 8.4 combos, but we will include more hands. If we were somewhat unsure our range might equal .8AA+.6KK+.3QQ. If we were completely unsure of our ability to predict, the range would remain unchanged (it would be normalized down, so all discount numbers would be multiplied by the percent, but this wouldn’t have any effect on the relative probability of our opponent having each hand). We will very rarely be completely sure or unsure, and will usually use at least some blurring. Note that blurring can also come from other things besides neural net uncertainty, but the ratio of hands in a given distribution which are discounted, and the degree of these discounts, still always acts as a measure of how unsure we are of their range.

As you will see later, this process needs to be fast, as it will happen a lot of times each decision as we estimate the EV of hypothetical situations. There are a lot of details I left off here, most because I haven’t thought about them and some because I felt it tedious to list them all. An example of something I oversimplified is suites need to be differentiated, but were left out of the example. One aspect of this system which could be positive or negative is that it is heavily reliant on the abilities of our neural net to predict.

There are 2 main alternatives to this system. The first is the bayes net you have been working on which to be honest I still don’t understand well. The other system would work roughly like this: Before each opponent acts, we will figure out what our opponent is likely to do with each of the hands in his range. Then, after he acts, we will figure out his new range using Bayesian statistics using the likelihood of each hand to begin and the likelihood he would execute that action with each hand, given his action. This is a very open ended system and quite similar to the one described in black above. The key to it is the first step, determining what he is likely to do with each hand in his range.

Initial self hand ranging. We create a probability density function of our own hand range by running every possible hand through the preflop program.

Hopefully this won’t be too computationally time consuming. We could have this running in the background while preflop is going on and it isn’t our turn to act.

General self hand ranging. We will run through the hand ranging system we built for our opponents, using our own stats and previous range.

I was going to go into more detail here but it doesn’t seem needed. Hopefully you can see how this would work and I’m not missing any major problem we could have with it. Not that there won’t be problems, just that we can overcome them.

A small problem arises each time we use our own hand range for anything: We are assuming our opponent is as good at reading our hand as our system is, which is not going to be true. Mid stakes pros will certainly be better and low stakes fish will certainly be much worse. Determining where we are on this scale and adjusting for it is a serious issue, but one that we should wait to address in full until we have a better idea how good this system will be at guessing our range. Nevertheless, certain fixes will be built into uses of our range for times where fish will have no clue what our range is. In general, determining our own range isn’t very important against major fish and every time we are doing it we are not allowing ourselves to maximally exploit fish.

A method that would produce perfectly accurate hand ranges would be to run through our entire decision making process with each hand in our range, and use that to find our new range. This would be extremely simple to implement, but would likely cause serious computational time issues.

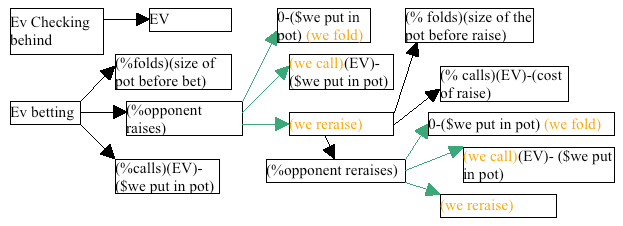

EV trees and determining action. We will take the action with the highest EV. The function for EV of an action is the probability each result of that action will take place times the total EV resulting from that action taking place. This has a branching effect, as in order to determine the EV of immediate possibilities we have to break it down into each later possibility, and this creates a nearly limitless function tree. Because this will be too complex for us to carry out in real time over all streets (trying to calculate the EV of each possible outcome for each possible turn and river card would be too resource intensive), we will always estimate explicitly all of the flop actions, and then use an estimator function for all turn and river possibilities for each ending branch of the whole flop action tree.

Each time it is our turn to act on each street after our first time, we will already know our action. This is because while evaluating this tree for the first time, we need to know the “probability” we will execute each 2nd or 3rd action because this determines the EV of this course. Thus we will always solve for the highest EV options down the road and determine conditional probabilities of 1 for the choices we would make. Each time we need to make a hypothetical choice, new hand reading will be done for hypothetical ranges at that point, and these new hand ranges will be used in all further actions down this path (and will possibly be modified hypothetically again).

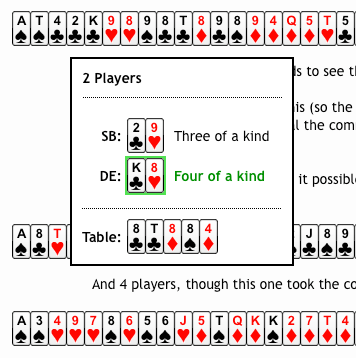

Because this is somewhat complicated, here is an example to illustrate.

Every box that is a dead end will be calculated first, and these will be used to calculate the boxes before them. Each end box will have different calculations in the part labeled “EV” on the graph (which is really not the whole EV in every box it is in, I just needed to call it something on the graph), but these will be standardized and an algorithm will be written to determine each specific equity equation. The trees will not extend past this street. Estimations will be used to look at ExE on future streets from the point we are at in the tree and this will be combined with pot size and SdE to give the “EV” values.

In this example, we raised preflop and got called by the big blind. He checks the flop. We now need to determine if checking or betting has high EV in this spot. Each probability is conditional and dependant on all probabilities of paths leading to it, and is determined by our neural network discussed later, unless the probability is our choice in which case it will be 1 or 0 depending on which branch has the highest EV. Our choices are shown by lines in green and words in orange. There is one last unfinished choice (the last (we raise)), who’s branches were left undone because of space limitations. But this brings up an important point…

When we can’t finish the equation in a reasonable amount of time. When it isn’t possible to complete trees because there are too many raises possible (deep stacked unraised pot), ranges are really wide, or the pot is multiway, or when we are going to take too long for any reason, we will write code that will quickly estimate and finish off sections of trees. We will write a time estimator function that will run beforehand and also will rank branches based on importance, which will be based on EV affect times probability, and thus we will determine which branches to estimate and which to complete.

I left off descriptions of how to write these estimating functions because I want to have a better idea of what situations I need to cover when I write these. This estimator function could be written in a number of ways, but it will probably be at least somewhat time consuming. This section will be similar to the ExE section.

So there you have it. This is the real heart of this system. Two quick notes: First, you might want to come back and read this after you have read the later parts and understand the whole system. Second, I wrote it originally for flop play and went back and converted it to all play, so this could be a cause of errors. Anyways…

I think this is about the only way that really makes sense. All other systems I imagine us using would either be too complicated to program or wouldn’t be advanced or scaleable enough. The biggest limiter here is computation power. I really am not sure about what things we can and can’t do in a reasonable amount of time. I know in the past I’ve way underestimated on these things, and so you need to read this whole document and understand everything and then tell me what we can and can’t do in a reasonable amount of time. My conservative guesses are the guideline I have written out here, and I could really use a more experienced view. If I have overestimated but not by a lot, and we can’t get the time down by writing better algorithms, it might be worth looking into buying a fast computer.

This system’s success will depend primarily on 2 later sections, which are really just extensions of this section: the predicting probabilities section, which needs to be good, as a few points in deviation will often decide action, and the ExE section which will quantify how much of our SdE equity can be retrieved as real equity, and how much we can outplay opponents. If we somehow fail in one of these other two sections, we will need a new system.

As I see it, the level of complexity we implement is the only real variable in the above. There are two issues with this: computation power, and amount of time programming, when considering possible outcomes especially in regards to the turn and river. There are some times, like heads up when the pot is largish relative to effective stack size, and thus the equity calcs are all really short, where we could probably do some later street calculations for different cards and possibilities. But this would have to be written separate from everything else, and might be tough for the flop because you also have to consider river possibilities which could be too complex to model in the same way, and so maybe would only be worth adding on after the bot was already working. However, adding some of this functionality to later streets should be our major priority after this bot is working, if we haven’t already done it.

If things are taking too long and we can’t make it more efficient, there are some simple things we could adjust that would be only slightly worse but much quicker, as is discussed in most of those sections.

The other options would be simpler “cheating” systems that use clever generalizations to arrive at correct play (this also describes this current system, but at least it does some ev calcs). One example would be a system that would (in a nutshell) find the proper bet percent by using opponent call, fold, raise, check raise, etc. percents, and adjusting for some things like flop texture and stack size, and then rank our hand based on where it is in our range, and then decide action based on which percent on the list we ended up on. Then we would use a system similar to the “section 2” one above but only for the turn and river where there are less branching variables and stack sizes are higher relative to the pot usually. This is actually my original flop system before I contacted you, and I think with some other clever adjustments that I won’t go into here, it would play pretty well without using many comp resources.

Dealing with different bet sizes. The above chart is also overly simplistic because we do not account for different bet sizes. In the actual program, we will break our own choices down into 2-4 different bet sizes depending mostly on stack sizes, pot size, board texture, and the street we are on. For each opponent decision, we will evaluate for a number of rounded raise sizes, using the likelihood of each and the difference in likely EV as the biggest factors in HOW we break it up. The NUMBER of pieces we break it into will be determined by computation time considerations.

This is something that will exponentially affect computation times with that won’t affect our results much at higher levels, but we need to maintain at least a base of 2 for us and like 3 (pref 4 cause we always need all in) for them. It might seem like a huge simplification to only have 2 bet sizes (and 1 for most flops), but really when I play I use 2 95-98% of the time, not including the river, which we can probably increase just for that because of a smaller tree. Having less bet sizes is a great way to hide information in our play, something I will talk about later.

Two other things worth noting here: I really want to read the section in math of poker for bet sizing, but haven’t yet, and I might change my ideas based on some content. The other thing is that I need to know what is computationally possible before I write this in, because programming for 4 sizes will be much different than for 2. Keep in mind that for each extra size, we are creating an exponential amount more of new DTAs, nn run-throughs, comparative and relative range and equity calculations, etc.

Calculating EV. Because we don’t have to worry about probability, and because adjustments for contributions are already made in the EV tree part, we only have to worry about three main components, set forth in this equation…

EV=(pot size)(SdE+ExE)

Pot size is simple, and SdE is just our equity if we were all in at this point. ExE is much more complicated. Here are SOME of the factors that will be involved in ExE equations. For all these things I will write functions in general form that can use all the real or hypothetical data we have, and combine it in a set of functions with subjective weights based on certain factors.

-Generally, how likely we are to get paid off when we are ahead and how likely we are to get our opponent to fold when we are not. An equation for this could consist of a measure of the how many second best hands the opponent is likely to make, and how easily we can value bet (how many hands does he have that are better, will we need to fold if raised). This will be added to a special neural net trained to determine later bluff equities. This neural network will be as complex as any of our others. It will involve a large number of factors and will be based on how often our opponent folds on next street(s) given the action on the street so far and other info. If for some reason it isn’t feasible, we could use some quick general stats like fold to next street bet %s.

-Knowledge of our opponent and specifically which mistakes he makes, their severity, and how often he makes them. This is a more nebulous assignment, which I will work on specifying later. I have some ideas though.

-How much of our SdE is coming from draws and the quality (i.e. strength of the hand that we are drawing to) of those draws, compared to effective stack size(s). An algorithm can be written for this. It will be a pain to do perfectly though.

-Having a mixed range of draws and made hands in our range will increase our ExE for all hands. It should be easy to write an algorithm to determine how many draws (of a certain minimum quality) are in our range versus how many made hands

-How much of our opponents’ SdE come from draws, and the quality of those draws. Uses the algorithm above

-Considering how this hand lies in our own range, the lower the hand’s value is in our range relatively, the higher its ExE

– Position

-Generally, the narrower our range the lower our ExE. These last three will be easy to do but hard to weight

-Knowledge of our own limitations as a program, and knowing how mistakes we make or shortcuts we take cost us in specific situations (one likely example of this might be difficulties in accurately modeling correct play in multi-way pots). This one will be tough, and we will need to do it after the rest of the program is tested and working.

We will combine these by just summing their individual importance. Our ExE will always be expressed as a real number, usually a decimal, showing importance relative to SdE, and should average 0 against players we are even against, negative against better players, and positive against worse players. However, our situation (cards, position, stack size, etc.) will usually have a larger impact than the players we are playing against. Also not that these values will be computed differently for each street.

This is something I will work on after you leave, as it is more of a poker issue than a programming issue. I would like to hear your input on how I approach this, however, if you have any. One thing I WILL be sure to do is heavily document my approach to dealing with weightings and all subject variables, and show my work with explanations on all math I do (different than how I did preflop).

Short of just fully calcing all streets, I don’t see what else we could do that differently for this.

One good but difficult change we could make for this is to fully integrate the “When we can’t finish…” section with this section and the tree section. The resulting process would go something like, calc each immediate possible outcome, then do the ExE section ignoring street, just using later action (it would have to be partially rewritten), then if the result with the probability is “important” enough then we would toss this estimation out the window and instead go one level deeper, for all the next possible actions (whether its on this street or next) and estimate from that point and repeat the process. We would have to build a “barrier” so that we only jump to next street action when it is very important, as we have to predict action for each new card in these cases and it will thus take a lot of time. We would continue this until some set time has passed or till we figure relevant paths out to our satisfaction.

This was written rather densely but I think is an important idea, so I’ll explain it better if this isn’t enough. Even though this is more complicated than the system described in black, I actually thought of it first, and decided it was too complicated to write out in this document, so I simplified everything. If you don’t think computation problems and difficulty with the required dependant algorithms will be too much, or if they WILL be too much (in which case we would throw out the later street part) then I want to write this section instead of what’s written in black. Maybe we should write it like this anyways.

Probabilities will all be taken from six large and important neural nets. We will have two nets per street; one of these nets will focus on when play is heads up going into that street and the other will focus on pots that are multiway going into that street. These nets will be trained using roughly a few hundred total factors. Here is a very rough breakdown of some of the factors involved.

-Factors describing the basic action preflop and position and stack sizes(~20)

-Factors describing every action so far postflop (with bet sizes)(~20-50)

-Factors describing board texture, especially last card on turn and river (~15-35)

-Stats describing our opponents’ play style(s) (~25-60)

-Stats describing our opponents’ opponents, including us when we are playing against them (~25-60)

-Various metrics on all hand ranges describing their make ups and how they relate to others and themselves. A lot of this will be taken from the ExE section. (~25-60 factors) This section of metrics might be pretty resource intensive, so keep that in mind. Every time a probability is grabbed, which can be hundreds of times each decision, if we are doing multiple algorithms for each hand in each players’ range, this could end up at many thousands of algorithms for each decision. There are obvious ways we could limit this without giving up much, like writing non-rigorous algorithms in the ExE section.

Error will be determined by using the average success of this particular net with similar factors used (this is doable right?), and the success of the last ~25 non hypothetical predictions we made against this player.

So I’ve decided breaking it up into six was best, as people act so differently heads up and since they each have like 25-100 different variables, it would be better to split them.

One big problem with using neural nets, especially against good players is that it’s going to be hard to factor in our own play styles. I wrote in “opponent statistics” as a category, but there are some issues that might come up. Frankly, I don’t know how this will turn out.

Another important thing to consider along with the above, and this applies to all sections not just predictions, is that because this whole postflop system is more advanced and less organic than the one I had envisioned, it might create a play style that is pretty divergent from most “normal” tags. Many players at medium stakes fold a very exploitable amount, and this might cause us to go somewhat apeshit on them (raise raise raise raise). This will be great at first, but as they play with us more and start adjusting, we are going to have to write something in to counter this in the short run (we would have trouble adjusting to adjustments naturally because it will take a while for the new opponent stats to show up, especially if we have a lot of hands on them). This whole “adjustments by good players” issue could potentially cause us a lot of problems, but there are solutions to them. I don’t want to write fixes for this yet though because a) we are going to start at lower limits where players will be much worse at adjusting to us and b) I’m not really sure what our style will look like yet, and I need to know how we play to know how our opponents will react to us.

A quick tangent to the above: because I decided to go in a more inorganic direction, choosing EV calcs over pros’ thought processes, our play might be noticeably inorganic. This could raise suspicion if we play a lot, but I’m not too worried about it, and if our style is too divergent, we could always right some adjustments that should solve the problem.

Getting back to the neural nets: we need to remember we are using this for a large amount of things, and have no secondary protection worked out. One thing that relies completely on our NN is our raise sizing. We might need to go back and write some separate raise sizing algorithms (this would also greatly slim our action tree) if the NN isn’t able to adjust well to a few changing values. There are probably other circumstances similar to this one that might need separate algorithms.

How good these neural networks are determines a big chunk of our profit. It is one of the four big ifs: IF the DTAs with initial ranging are pretty accurate, IF the ExE section is well written, IF we don’t run into major computation time problems, and IF we can accurately predict opponent frequencies, I think we will be able to beat middle games better than all but the best (how does $72/100 hands sound?). Obviously, if we fall short, we still have some cushion to work with.

Conclusion

The above plan is optimistic. There are a lot of interconnecting variables, and if one of these is off, or they are all by a bit, we might get screwy results. There aren’t a lot of protections built in. If we make a mistake, often we won’t just fail to exploit, we ourselves will play a losing style. Generally, the more dependant aspects of a system are on each other, the more potential the system has, but the more affected it is by mistakes. So if we want to protect ourselves, the way to do it would be to isolate some of the sections from each other and use less exploiting processes and more focus on playing a certain style in certain “isolated” situations.

This is certainly something to think about. I thought a lot about each aspect of the above plan, but postflop NLHE play isn’t something that is usually described in 8 pages (a lot less without comments).

There is a lot of red and blue text above. This underlines that we are in an extremely open ended situation right now. Building this from the ground up allows us an insane amount of flexibility, and given the nature of this project, we should try not to “settle” on anything. If there is an aspect we don’t like, we need to fix it before code is written and time is wasted. I feel the design of postflop is the most important part of this project, and is all we should focus on this week. I want to have you here during the most crucial parts because you understand the technology and programming much better than me, and it is also good to have another poker mind checking my work.

I was going to write out a plan of attack here, but I want to read your comments on each part, and do some more in depth reviews of other possible technology you have in mind like Bayesian networks before we allocate the time we have this week. Nevertheless, I will give a very naïve time line.

-Work on outline of postflop plan, review options and choices (this week)

-Hammer out some of the details and write a more in depth plan (next week)

-Write out the algorithms, thought testing and error checking as I go (3-5 weeks?)

-Error check and edit the whole thing, write new code if needed (1-2 weeks)

-Finish preflop if you aren’t back yet, if you are, do this with below (1-2 weeks)

-Work with you on neural nets, hand parsing, and other misc. tech (2-5 weeks?)

-Work with you on programming postflop (1-2 weeks?)

-Concurrently play a good amount of poker to get back in touch with games (n/a)

-Bug finding, troubleshooting, polishing (0-2 weeks)

-Work with you on speeding up algorithms and program in general (0-2 weeks)

-Prelim play tests and optimization (1-2 weeks)

-Setting up to play online (0-1 weeks?)

-Sweating sessions in real online games, more optimization (1-4 weeks)

-Unleash the monster, while working on more add-ons, optimization, fixes, etc.

So adding up the times, I guess a minimum of 12 more weeks and a max of 30 weeks. Longer than I thought, but I think a lot of these guesses are pretty conservative.

All in all, I still feel as optimistic as I ever have about this project. I am confident that even if a large number of the aspects of the plan I wrote above don’t work out, we can find acceptable alternate methods. Tell me what you think!