Background

Backpropagation is a common method for training a neural network. There is no shortage of papers online that attempt to explain how backpropagation works, but few that include an example with actual numbers. This post is my attempt to explain how it works with a concrete example that folks can compare their own calculations to in order to ensure they understand backpropagation correctly.

Backpropagation in Python

You can play around with a Python script that I wrote that implements the backpropagation algorithm in this Github repo.

Continue learning with Emergent Mind

If you find this tutorial useful and want to continue learning about AI/ML, I encourage you to check out Emergent Mind, a new website I’m working on that uses GPT-4 to surface and explain cutting-edge AI/ML papers:

In time, I hope to use AI to explain complex AI/ML topics on Emergent Mind in a style similar to what you’ll find in the tutorial below.

Now, on with the backpropagation tutorial…

Overview

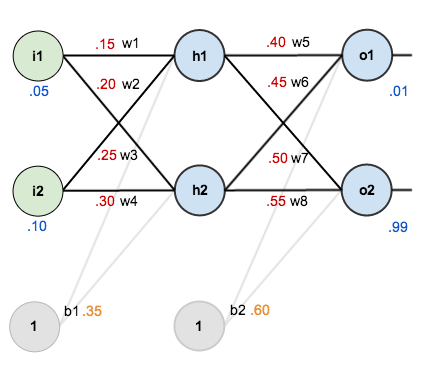

For this tutorial, we’re going to use a neural network with two inputs, two hidden neurons, two output neurons. Additionally, the hidden and output neurons will include a bias.

Here’s the basic structure:

In order to have some numbers to work with, here are the initial weights, the biases, and training inputs/outputs:

The goal of backpropagation is to optimize the weights so that the neural network can learn how to correctly map arbitrary inputs to outputs.

For the rest of this tutorial we’re going to work with a single training set: given inputs 0.05 and 0.10, we want the neural network to output 0.01 and 0.99.

The Forward Pass

To begin, lets see what the neural network currently predicts given the weights and biases above and inputs of 0.05 and 0.10. To do this we’ll feed those inputs forward though the network.

We figure out the total net input to each hidden layer neuron, squash the total net input using an activation function (here we use the logistic function), then repeat the process with the output layer neurons.

Here’s how we calculate the total net input for :

We then squash it using the logistic function to get the output of :

Carrying out the same process for we get:

We repeat this process for the output layer neurons, using the output from the hidden layer neurons as inputs.

Here’s the output for :

And carrying out the same process for we get:

Calculating the Total Error

We can now calculate the error for each output neuron using the squared error function and sum them to get the total error:

For example, the target output for is 0.01 but the neural network output 0.75136507, therefore its error is:

Repeating this process for (remembering that the target is 0.99) we get:

The total error for the neural network is the sum of these errors:

The Backwards Pass

Our goal with backpropagation is to update each of the weights in the network so that they cause the actual output to be closer the target output, thereby minimizing the error for each output neuron and the network as a whole.

Output Layer

Consider . We want to know how much a change in

affects the total error, aka

.

By applying the chain rule we know that:

Visually, here’s what we’re doing:

We need to figure out each piece in this equation.

First, how much does the total error change with respect to the output?

is sometimes expressed as

When we take the partial derivative of the total error with respect to , the quantity

becomes zero because

does not affect it which means we’re taking the derivative of a constant which is zero.

Next, how much does the output of change with respect to its total net input?

The partial derivative of the logistic function is the output multiplied by 1 minus the output:

Finally, how much does the total net input of change with respect to

?

Putting it all together:

You’ll often see this calculation combined in the form of the delta rule:

Alternatively, we have and

which can be written as

, aka

(the Greek letter delta) aka the node delta. We can use this to rewrite the calculation above:

Therefore:

Some sources extract the negative sign from so it would be written as:

To decrease the error, we then subtract this value from the current weight (optionally multiplied by some learning rate, eta, which we’ll set to 0.5):

We can repeat this process to get the new weights ,

, and

:

We perform the actual updates in the neural network after we have the new weights leading into the hidden layer neurons (ie, we use the original weights, not the updated weights, when we continue the backpropagation algorithm below).

Hidden Layer

Next, we’ll continue the backwards pass by calculating new values for ,

,

, and

.

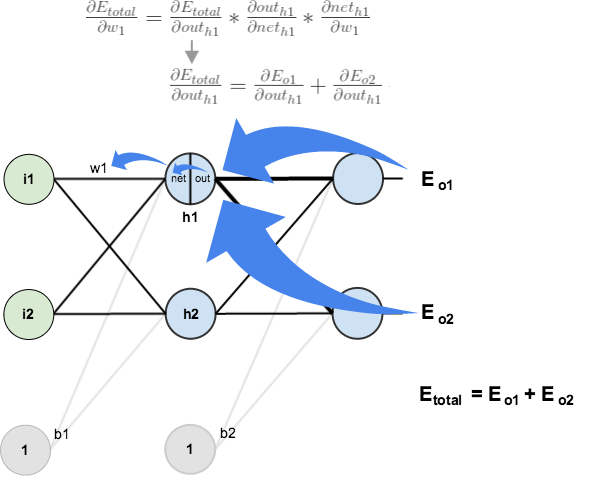

Big picture, here’s what we need to figure out:

Visually:

We’re going to use a similar process as we did for the output layer, but slightly different to account for the fact that the output of each hidden layer neuron contributes to the output (and therefore error) of multiple output neurons. We know that affects both

and

therefore the

needs to take into consideration its effect on the both output neurons:

Starting with :

We can calculate using values we calculated earlier:

And is equal to

:

Plugging them in:

Following the same process for , we get:

Therefore:

Now that we have , we need to figure out

and then

for each weight:

We calculate the partial derivative of the total net input to with respect to

the same as we did for the output neuron:

Putting it all together:

You might also see this written as:

We can now update :

Repeating this for ,

, and

Finally, we’ve updated all of our weights! When we fed forward the 0.05 and 0.1 inputs originally, the error on the network was 0.298371109. After this first round of backpropagation, the total error is now down to 0.291027924. It might not seem like much, but after repeating this process 10,000 times, for example, the error plummets to 0.0000351085. At this point, when we feed forward 0.05 and 0.1, the two outputs neurons generate 0.015912196 (vs 0.01 target) and 0.984065734 (vs 0.99 target).

If you’ve made it this far and found any errors in any of the above or can think of any ways to make it clearer for future readers, don’t hesitate to drop me a note. Thanks!

And while I have you…

Again, if you liked this tutorial, please check out Emergent Mind, a site I’m building with an end goal of explaining AI/ML concepts in a similar style as this post. Feedback very much welcome!

This example single handedly taught me how to actually make a neural network. My one question though pertains to the weights of the bias for each layer.

Are the bias’ and the bias weights supposed to remain unchanged, or are the weights adjusted through back propagation just as any other?

Hi,

Could you please explain why the biases are not get updated?

That’s because the biases are threshold values that are by default set as constant (Their values are governed, depending on the application of NN, by one of the following : business logic, scientific fact such as 273Kelvin or 3*10^8m/s etc.)

Trying to understand why a multiplication with -1 in the dE(total)/dOut(o1).

if f(x) = a*x^r then

df(x) = r*a*x^(r-1)

in this particular case

f(x) = 1/2 * x ^ 2

df(x) = 2 * 1/2 * x ^ (2-1) = x

So dE(total)/dOut(o1) should be = target-actual

However, you are multiplying by -1 which makes it actual – target.

Where does the -1 come from?

never mind, I just realized the x = actual and not target-actual so the -1 is (0-actual). It was just not very intuitive the way you wrote it. Maybe it’s worth adding an explanation…

I agree, I was tripped up by this and am still having trouble with it. Vic, could you give a further example of how you understood it?

You must remind chain rule for derivatives.

f'(x) = f'(x)x’

could you explain it, I still dont know wheres does -1 come from :/

ow ok, d(0.1) – d(out1) = 0 – 1

Thank you so much. This helped tremendously!

Great post! Thank you. I got a lot of insight on how backpropagation works.

Excellent explanation

This is excellent explanation.

Thank you, you helped me a lot.

I was working with Neural Networks – A Comprehensive Foundation by Simon Haykin. But Matt explanations are easier to work with.

Fantastic. Greatly appreciated

Great explanation deserves spreading!

Amazing explanation! Thanks for taking the effort!

Thanks for this!

This helped me quite a bit, it explain back prop with a larger network, though there aren’t any numbers: https://cookedsashimi.wordpress.com/2017/05/06/an-example-of-backpropagation-in-a-four-layer-neural-network-using-cross-entropy-loss/?frame-nonce=f08dbcf84b

so how would the backpropagation look in the case of more hidden layers? for instance, if we have 2 hidden layers (1st layer with 2 neurons, second layer with 3), 2 input neurons and 2 output neurons. we want to find dEtotal/dw1. Would you have partial errors for each neuron in the second hidden layer? like dEh3/dneth3*w5+dEh4/dneth4*w7+dEh5/dneth5*w9 ? if so what is the value of each Ehx (x=3 to 5) or just how do you solve dEtotal/dw1?hope it makes sense

Your explanation was so helpful. The penny finally dropped. Thank you.

It helped me a lot for creating my first neural network example. If you have difficulties for implementing your neural network.

Check my example : https://github.com/mlhtnc/deep-learning-example

I have compiled your code and it’s working.

Thanks a lot for clear explanation

Do any body know any source to find a general equations for the feed forward back propagation. So we can apply the model for any number of hidden layers and output layers

Awesome! This post finally help me to code a learning layer only using a matrix library. I am a lazy tweeter but this totally deserves it. Even my source code has a link to this article! https://twitter.com/Corlaez/status/897002103478071297

Matt, What if any is the relationship between your article (nice one just to add) and this YouTube video I found https://www.youtube.com/watch?v=xJkUKKxeYYg? There appears to be a lot in common.

Thank you so much, Matt! It`s the easiest explanation of backprop I have ever come across!

is this a right answer: w1..w8

-0,860918581,-1,807310582,-0,756487191,-1,69622177

-4,934133883,-4,808773679,3,736390466,3,738422962

Error=2,80447E-09

am I right?

Thankyo very much .I have been stumbling around many blogs to see how numerically back propagation work . You blog saved my time . :-)

Thanks for this valuable article. I have a equation. When you update the hidden layers, the following equation seems to be not correct.

\frac{\partial E_{o1}}{\partial out_{h1}} = \frac{\partial E_{o1}}{\partial net_{o1}} * \frac{\partial net_{o1}}{\partial out_{h1}}

I noticed it too. The net_{o1} and out_{h1} should switch

I noticed that too. net_{o1} and out_{o1} should be switched

awesome article to understand this crazy neural network stuff

I’m a beginner in ML. But I’ve learnt that the error function used with logistic (sigmoid) function isn’t convex, So we cannot use gradient descent algorithm due to local optima. So, why did we used it here?

i guess we can still use it. It will converge to local optima. Global optima not guarenteed

Many thanks! This is quite clear in mathematics and functionality.

Really Really helpful for understanding and visualising back-propagation. Beautifully explained and very nicely graphically shown too. Thanks a lot :D

Good and informative post .One comment, the use of symbols in the diagram and the in the paragraphs where you explain the back prop steps are not similar(or I should say a bit different), it will be great if the same symbol can be used,

Reblogged this on Algo Trading.

hi, thank you very much, it ist simplest explanation I found about backprop in net. I could understand all your calculation up to the last point, where you say “After this first round of backpropagation, the total error is now down to 0.291027924”.

how did you come to this number? I think you did new forward pass with new weights and calculated new total error. do i see correct?

Thank you very much Matt, it greatly helped me to find the bug in my code. But your final values are not correct: it should be { 0.011587, 0.988459 } if you did not count first run and { 0.011587, 0.988458 } if you did.

fantastically simple, i understand now !

Really great post, I love how you use the blue blocks to denote the different notation syntaxes of different sources. Very clear!

You are GOD.I was stuck in week5 of Machine Learning course by Andrew.

This comment section should be at top…

Even noting and understanding from your blog took 3 hrs ,I am imagining how much time you would have devoted :)

Danke!

Thanks for such a clear explanation of backprop

Wonderful tutorial. I have a question with the initial calculation in the forward propagation. The calculation performed above states that the input for h1 is (0.15 * 0.05) + (0.2 * 0.1)+bias (weights 1 & 2). However, the graph, I think, shows (0.15 * 0.05) + (0.25 * 0.1)+bias (weights 1 & 3).

Have I looked at the graph incorrectly?

Thanks!

If you look at the first graph, the one without the weight values, you can see that w1 and w2 is weights for h1 and that w3 and w4 are weights for h2. The graph with the values may make us mix up w2 and w3, because of the labels disposition.

Great tutorial, btw :)

I have found here an explanation on why the gradient for the output layer was simply “output – target”. Now, I got it. 1000 thanks for taking time to share :)

Very good explanation with nice example. Thank you very much!!!

Thnaks a ton for such a crystal clear explanation! Made my day :)